Enterprise leaders are deploying AI agents at an accelerating pace. The appeal is obvious: agents that can automate complex, multi-step business processes across departments, systems, and data sources. But as agent architectures evolve from single-purpose assistants to interconnected Agent Meshes orchestrating work across entire organizations, one question moves to the top of every CISO’s list: Who is allowed to do what, and how do we enforce it?

The answer is not simple, because enterprise agent security has two distinct dimensions that must work in concert: inbound authorization and outbound access control.

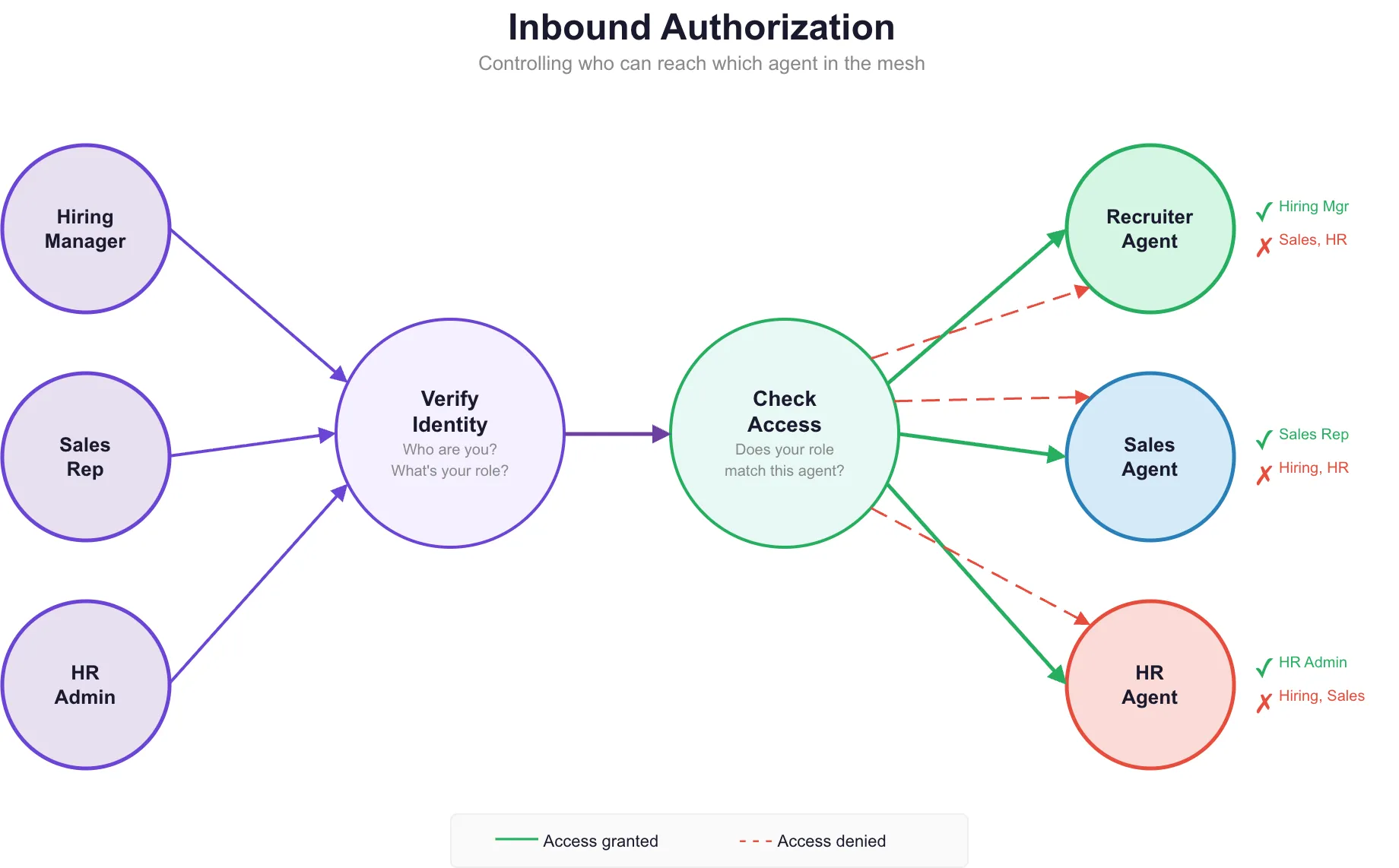

Inbound Authorization: Controlling Who Can Reach the Agent

Consider a typical enterprise Agent Mesh. A single workflow might orchestrate an HR Agent that accesses employee records and benefits data, a Sales Agent that queries CRM pipelines and revenue forecasts, and a Recruiter Agent that searches candidate databases and scheduling systems. These agents operate within the same mesh, but the users invoking them have vastly different roles and clearance levels.

A hiring manager should be able to interact with the Recruiter Agent but must never see compensation data surfaced by the HR Agent. A sales representative needs pipeline visibility but has no business accessing employee PII. Without inbound authorization, every user who can reach the mesh can reach every agent and every data source behind it. That is not a theoretical risk. It is a compliance violation waiting to happen.

Inbound authorization ensures that user identity, group membership, and role-based permissions are validated before any agent processes a request. The mesh enforces these boundaries at two levels: the node level, which determines which agents a user can invoke, and the tool level, which determines which data sources and actions each agent can execute on behalf of that specific user.
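To make the two enforcement points concrete, here is a minimal Python sketch of deny-by-default, group-based checks at the node level and the tool level. The `UserContext` type, the `AGENT_POLICY` and `TOOL_POLICY` tables, and all group, agent, and tool names are hypothetical illustrations, not Karini AI's API; in practice the user's groups would come from a validated identity token.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class UserContext:
    """Verified identity extracted from an identity token (hypothetical shape)."""
    user_id: str
    groups: frozenset = field(default_factory=frozenset)


# Hypothetical policies: which groups may invoke each agent (node level)
# and which groups may use each tool inside an agent (tool level).
AGENT_POLICY = {
    "hr_agent": {"hr_admins", "employees"},
    "recruiter_agent": {"recruiters", "hiring_managers"},
    "sales_agent": {"sales"},
}
TOOL_POLICY = {
    ("hr_agent", "my_benefits"): {"employees", "hr_admins"},
    ("hr_agent", "compensation_benchmarks"): {"hr_admins"},
    ("recruiter_agent", "search_candidates"): {"recruiters", "hiring_managers"},
}


def can_invoke_agent(user: UserContext, agent: str) -> bool:
    """Node-level check: the user needs at least one group allowed on the agent."""
    return bool(user.groups & AGENT_POLICY.get(agent, set()))


def can_use_tool(user: UserContext, agent: str, tool: str) -> bool:
    """Tool-level check: requires node access, then a matching tool policy."""
    if not can_invoke_agent(user, agent):
        return False
    # Unknown tools have no allowed groups, so they are denied by default.
    return bool(user.groups & TOOL_POLICY.get((agent, tool), set()))
```

With these policies, a user in `hiring_managers` can invoke `recruiter_agent` but not `hr_agent`, and a user in `employees` can call `my_benefits` but not `compensation_benchmarks`.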

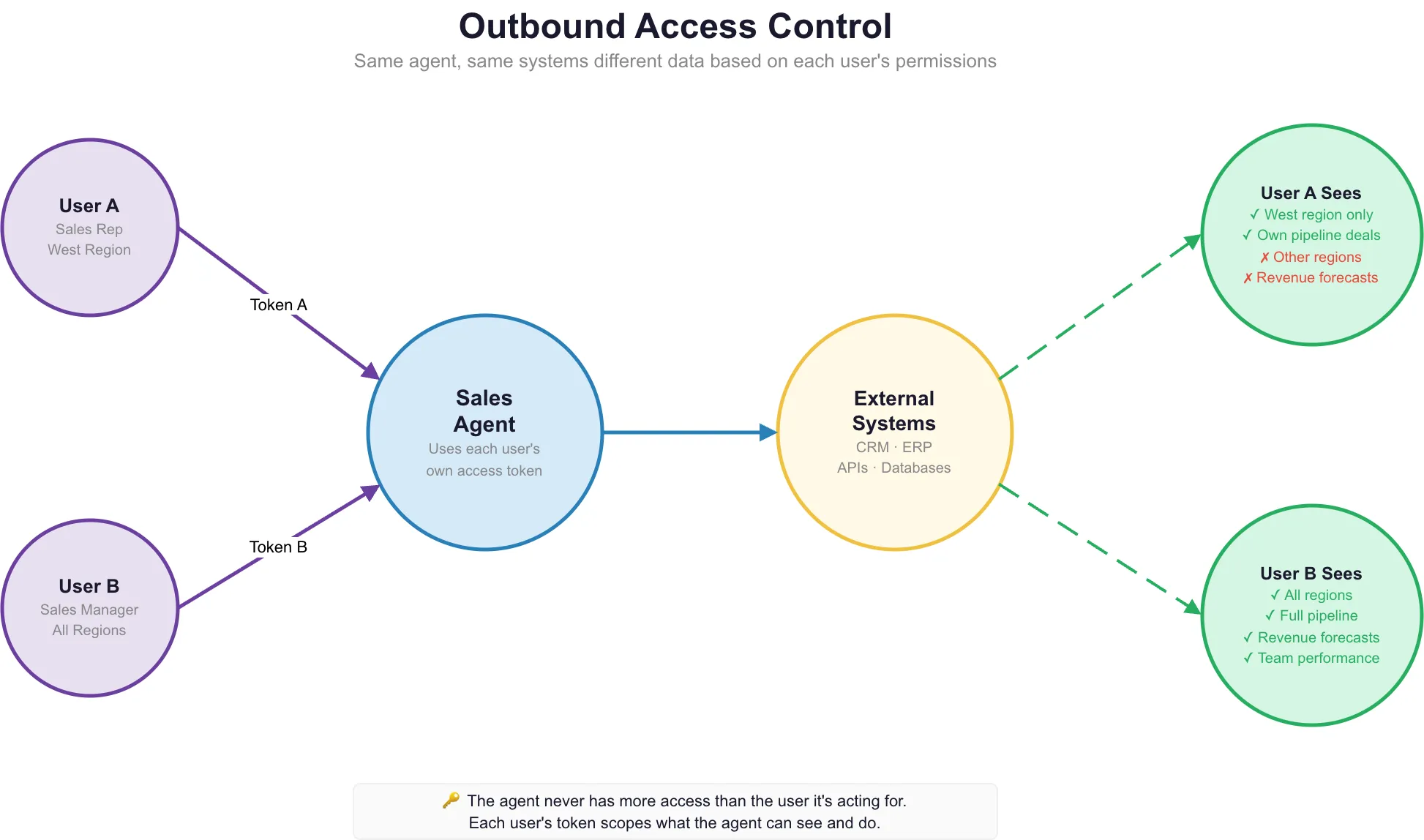

Outbound Access Control: Securing What the Agent Can Touch

The second dimension is equally critical. When an agent reaches out to external systems, APIs, databases, or third-party services, it acts on behalf of the user. Outbound access control ensures that the agent’s external actions are bound by the user’s actual permissions, not the agent’s technical capabilities.

Without outbound security, an agent with broad tool access could inadvertently expose data the user is not authorized to see, write to systems the user cannot access directly, or trigger actions that violate the user’s permission scope. In regulated industries such as finance, healthcare, and government, this kind of access leakage is not just a security incident. It is a regulatory event.
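The core idea of outbound access control is that a tool never calls an external system with a broad service credential; it uses the invoking user's own token, and the external system applies its own permissions. The sketch below illustrates that pattern under stated assumptions: `TokenStore` is an in-memory stand-in for a per-user, per-org token store, `FakeCrm` simulates an external system, and `crm_tool` is a hypothetical agent tool. None of these names come from Karini AI.

```python
class MissingUserToken(Exception):
    """Raised when the invoking user has not connected the external system."""


class TokenStore:
    """In-memory stand-in for a per-user, per-org token store (hypothetical)."""

    def __init__(self):
        self._tokens = {}

    def put(self, org_id, user_id, provider, token):
        self._tokens[(org_id, user_id, provider)] = token

    def get(self, org_id, user_id, provider):
        try:
            return self._tokens[(org_id, user_id, provider)]
        except KeyError:
            raise MissingUserToken(f"{user_id} has not connected {provider}") from None


class FakeCrm:
    """Simulated external system that enforces its own permissions per token."""

    def __init__(self, token_owner, accounts):
        self._token_owner = token_owner  # token -> user_id
        self._accounts = accounts        # list of (account_name, owner_id)

    def list_accounts(self, bearer_token):
        owner = self._token_owner.get(bearer_token)
        if owner is None:
            raise PermissionError("invalid token")
        return [name for name, acct_owner in self._accounts if acct_owner == owner]


def crm_tool(store, crm, org_id, user_id):
    """Agent tool: always calls the CRM with the invoking user's own token."""
    token = store.get(org_id, user_id, "crm")  # no fallback to a service account
    return crm.list_accounts(bearer_token=token)
```

The design choice worth noting is the absence of a fallback: if the user has not connected the system, the call fails instead of silently running under a broader identity.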

Effective agent security requires both dimensions working together: inbound authorization to verify who the user is and what they can reach, and outbound access control to ensure the agent’s downstream actions respect those same boundaries. Neither alone is sufficient.

Use Cases: Where Agent Security Becomes Business-Critical

Agent security is not an abstract infrastructure concern. It directly enables and constrains what enterprises can safely automate. The following use cases illustrate where inbound and outbound authorization become essential.

- Multi-Department Agent Mesh. A manufacturing enterprise deploys an Agent Mesh spanning procurement, logistics, and finance. Procurement analysts can query supplier pricing through the Procurement Agent but must be blocked from accessing financial forecasts managed by the Finance Agent. Each agent enforces role-based access at the node level, and outbound API calls to ERP systems carry the invoking user’s permission tokens.
- HR and Payroll Automation. An HR Agent processes benefits inquiries and onboarding workflows. Employees can ask about their own benefits, but only HR administrators can access compensation benchmarks and termination workflows. Inbound group-based authorization restricts which tools within the HR Agent respond to each user class.
- Sales Intelligence with CRM Integration. A Sales Agent connects to Salesforce and HubSpot via OAuth2. Each salesperson’s agent session uses their individual OAuth2 token, ensuring CRM queries return only the accounts and opportunities they own. Outbound access control prevents any single agent session from accessing the full CRM dataset.
- Healthcare Data Processing. A clinical agent assists with patient record summarization and insurance pre-authorization. HIPAA compliance demands that the agent only surfaces patient data to authorized clinicians. Inbound JWT validation confirms clinician identity, and outbound API calls to the EHR system carry scoped access tokens tied to the clinician’s privileges.
- Financial Due Diligence Workflows. A Deep Agent orchestrates due diligence across financial filings, legal documents, and market data. Analysts have read access, but only senior partners can trigger the agent’s write-back actions that flag findings in the deal management system. Human-in-the-loop gates enforce approval at critical decision points.
- Government and Public Sector Compliance. A public sector Agent Mesh processes citizen requests, routing between eligibility verification, document generation, and case management agents. FedRAMP and NIST compliance require per-user audit logging of every authorization decision, every data access, and every escalation to a human reviewer.
- Recruiter Agent with Candidate Data Isolation. A Recruiter Agent accesses applicant tracking systems and interview scheduling tools. Hiring managers see candidates for their open roles only. Recruiters see a broader pool but cannot access offer-stage compensation data. Outbound tool calls enforce candidate data isolation based on the user’s organizational scope.
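The recruiter use case is a good example of scope-based filtering applied to tool output. The following is a minimal sketch, assuming a hypothetical `Candidate` record and two user classes, `hiring_manager` and `recruiter`. As a conservative assumption, it redacts offer compensation for every caller, scopes hiring managers to the roles they own, and returns nothing to any other role.

```python
from dataclasses import dataclass, replace
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class Candidate:
    """Hypothetical applicant record returned by an applicant tracking system."""
    name: str
    role_id: str
    stage: str
    offer_salary: Optional[int] = None


def _redact(c: Candidate) -> Candidate:
    # Offer-stage compensation never leaves the tool in this sketch.
    return replace(c, offer_salary=None)


def visible_candidates(user_role: str, owned_role_ids: Set[str],
                       candidates: Iterable[Candidate]) -> List[Candidate]:
    """Filter and redact candidates by the caller's organizational scope."""
    if user_role == "hiring_manager":
        # Hiring managers see only candidates for the roles they own.
        return [_redact(c) for c in candidates if c.role_id in owned_role_ids]
    if user_role == "recruiter":
        # Recruiters see the broader pool, still without compensation data.
        return [_redact(c) for c in candidates]
    # Any other role gets nothing: deny by default.
    return []
```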

Karini AI Agent Security: Architecture and Features

Karini AI has built agent security into the platform from the ground up. This is not a bolt-on compliance layer. It is an integral part of how agents are constructed, deployed, and executed. Every capability described below lives in the Karini AI platform and is designed for the constraints of real enterprise environments.

Architectural Overview

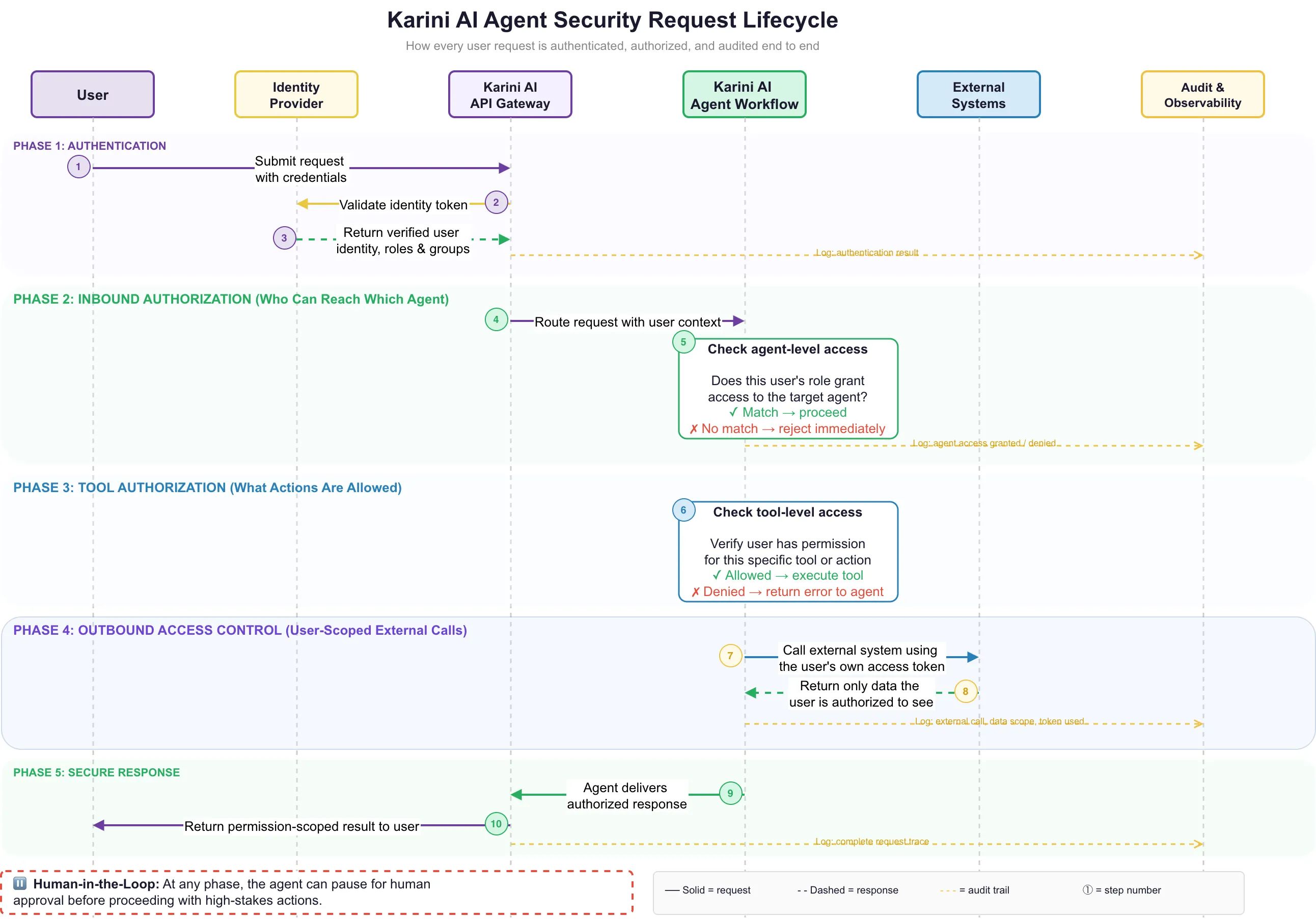

The Karini AI security architecture operates across three enforcement layers that work together to provide comprehensive protection for agent workflows.

| Layer | Enforcement |
|---|---|
| Identity Layer | JWT validation with JWKS caching, multi-provider SSO (Google, Azure AD, Okta, Ping or custom providers), user context extraction including roles, groups, and permissions |
| Agent & Tool Layer | Node-level authorization at graph construction (fail-fast), tool-level authorization at runtime (fail-graceful), group-based access control on every agent and tool |
| External Access Layer | OAuth2 Authorization Code + PKCE for outbound tool connections, per-user per-org token isolation, bearer token injection for MCP server calls |
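The External Access Layer relies on the OAuth2 Authorization Code flow with PKCE (RFC 7636). As a generic illustration of that standard, not Karini AI's code, the sketch below shows the client-side PKCE step: generating a `code_verifier`, deriving its S256 `code_challenge`, and building the authorization request. The function names and any endpoint, client ID, or redirect URI you pass in are hypothetical.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode


def s256_challenge(verifier: str) -> str:
    """BASE64URL(SHA256(verifier)) without padding, per RFC 7636 section 4.2."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def make_pkce_pair() -> tuple[str, str]:
    """Generate a fresh code_verifier and its S256 code_challenge."""
    # 32 random bytes encode to 43 base64url characters, the RFC 7636 minimum.
    verifier = secrets.token_urlsafe(32)
    return verifier, s256_challenge(verifier)


def build_authorization_url(authorize_endpoint: str, client_id: str,
                            redirect_uri: str, scope: str, state: str,
                            code_challenge: str) -> str:
    """Authorization Code request that carries the PKCE challenge."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "state": state,
        "code_challenge": code_challenge,
        "code_challenge_method": "S256",
    }
    return f"{authorize_endpoint}?{urlencode(params)}"
```

The client keeps the verifier alongside `state` and sends it as `code_verifier` in the token exchange; the authorization server recomputes the challenge and rejects the exchange on a mismatch, so an intercepted authorization code is useless on its own. The resulting access token is then stored per user and per organization.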

The following sequence traces the flow of events in Karini AI Agent Security, from the moment a user submits a query to the application through to the authorized data source or system:

- User submits a request with credentials: Karini supports both API key and session-based authentication, so agents can be invoked programmatically or from the browser.
- Validate identity token: Karini validates JWTs against identity providers (Google, Azure AD, Okta) with signature verification and claim extraction.
- Return verified user context: Karini extracts user ID, roles, groups, and permissions and propagates them through the entire workflow.
- Route request with user context: Karini's API Gateway passes the authenticated user context into the Agent Workflow, ensuring no anonymous requests reach agents.
- Check agent-level access: Karini enforces node-level authorization at workflow construction time. If the user's groups don't match the agent's requirements, the request is rejected before any computation begins.
- Check tool-level access: Karini verifies per-tool permissions at runtime. Unauthorized tool calls return a structured error to the agent, which explains the denial to the user instead of crashing.
- Call external system using the user's own access token: Karini injects the user's individual OAuth2 token into every outbound API and MCP server call, ensuring the agent never has broader access than the user.
- Return only data the user is authorized to see: External systems enforce their own permissions using the user-scoped token, so data isolation is guaranteed end to end.
- Agent delivers authorized response: Karini's agent assembles the response using only the data the user was permitted to access.
- Return permission-scoped result to user: The final response is delivered through the API Gateway, with every step of the lifecycle captured in the audit trail.
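The two authorization checks in this sequence behave differently on failure. The sketch below contrasts them under stated assumptions: `build_workflow` and `call_tool` are hypothetical helpers, not Karini AI functions. Node-level authorization raises while the workflow is being built, so nothing runs (fail-fast). Tool-level authorization returns a structured error that the agent can turn into an explanation for the user (fail-graceful).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


class AgentAccessDenied(Exception):
    """Raised while building a workflow if the user may not invoke an agent."""


@dataclass
class Workflow:
    user_groups: frozenset
    agents: List[str]


def build_workflow(user_groups: Set[str], agent_names: List[str],
                   agent_policy: Dict[str, Set[str]]) -> Workflow:
    """Fail-fast: reject the whole request before any agent runs."""
    for name in agent_names:
        if not set(user_groups) & agent_policy.get(name, set()):
            raise AgentAccessDenied(f"user may not invoke {name!r}")
    return Workflow(frozenset(user_groups), list(agent_names))


def call_tool(workflow: Workflow, tool_name: str,
              tool_policy: Dict[str, Set[str]],
              tool_fn: Callable[..., object], *args) -> dict:
    """Fail-graceful: return a structured error instead of raising."""
    if not workflow.user_groups & tool_policy.get(tool_name, set()):
        return {
            "ok": False,
            "error": "tool_not_authorized",
            "tool": tool_name,
            "message": f"You do not have access to {tool_name}.",
        }
    return {"ok": True, "result": tool_fn(*args)}
```

Failing fast at construction avoids spending compute on a request that can never succeed, while failing gracefully at runtime keeps a partially authorized workflow alive so the agent can still answer with what the user is allowed to see.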

Every authorization decision is logged with structured audit entries, and agents can pause at any step for human-in-the-loop approval on high-stakes actions.
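A structured audit entry is most useful when every decision produces one machine-readable record. The following is a minimal sketch, assuming a JSON-lines format and a hypothetical `record_decision` helper built on Python's standard `logging` module; the field names are illustrative, not Karini AI's log schema.

```python
import json
import logging
from datetime import datetime, timezone

# Attach a handler that ships to your log pipeline; unconfigured, INFO is dropped.
audit_logger = logging.getLogger("agent.audit")


def record_decision(user_id: str, resource: str, action: str,
                    allowed: bool, **context) -> str:
    """Emit one JSON line per authorization decision and return it."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user_id,
        "resource": resource,
        "action": action,
        "result": "allow" if allowed else "deny",
        "context": context,  # e.g. workflow ID, matched groups, denial reason
    }
    line = json.dumps(entry, sort_keys=True)
    audit_logger.info(line)
    return line
```

For example, `record_decision("alice", "hr_agent", "invoke", False, reason="group_mismatch")` yields a single JSON line that an auditor can filter by user, resource, or result.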

See It in Action

To demonstrate Karini AI Agent Security in a real-world scenario, we have built end-to-end demonstrations across the platform’s core surfaces: Prompt Playground, Recipe Builder, and Copilot deployment.

Step 1. Build an Agent with External Tools and Outbound Authorization

Step 2. Build an Agent Workflow with Inbound Authorization

Step 3. Test in the Chat Assistant

Benefits of Karini AI Agent Security

Enterprise agent security is the foundation that determines whether an organization can deploy AI agents at scale without creating unacceptable risk. Karini AI’s security architecture delivers measurable advantages across compliance, operations, and business enablement.

| Benefit | Impact |
|---|---|
| Zero-Trust Agent Access | Every agent request is authenticated and authorized before execution. No implicit trust, no inherited permissions, no shortcut paths through the mesh. |
| Regulatory Readiness | Structured audit logs, per-decision traceability, and OpenTelemetry instrumentation provide the evidence trail that HIPAA, SOX, FedRAMP, and GDPR auditors require. |
| Tenant Isolation at Scale | Per-user, per-organization token scoping in Redis ensures complete data isolation across tenants, eliminating cross-tenant data leakage by design. |
| Reduced Attack Surface | Input sanitization, prompt injection guardrails, tool call validation, and credential encryption shrink the attack surface at every layer of the stack. |
| Operational Confidence | Fail-fast node authorization and fail-graceful tool authorization mean security failures are handled cleanly, not as crashes. Agents explain access denials to users rather than returning errors. |
| Frictionless User Experience | One-click OAuth2 connections, pre-execution batch checks, and real-time status indicators mean security does not slow users down or require manual token management. |
| Safe Multi-Agent Delegation | Authorization context propagates through the full agent hierarchy. Sub-agents inherit and enforce the invoking user's permissions, preventing privilege escalation through delegation. |
| Human Oversight Where It Matters | Interrupt-and-resume gates allow organizations to enforce human approval on high-stakes actions without breaking agent workflow continuity. |
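Safe delegation means a sub-agent receives the invoking user's authorization context rather than its own, broader identity. The sketch below shows one way to get that property; `AuthContext`, `delegate`, and `require` are hypothetical names. The intersection rule (user permissions AND sub-agent configuration) is our illustrative design choice, not a documented Karini AI mechanism: it guarantees delegation can narrow access but never widen it.

```python
from dataclasses import dataclass, replace
from typing import Iterable, Tuple


@dataclass(frozen=True)
class AuthContext:
    """Immutable authorization context passed down the agent hierarchy."""
    user_id: str
    permissions: frozenset
    chain: Tuple[str, ...] = ()  # delegation path, useful for audit entries


def delegate(ctx: AuthContext, sub_agent: str,
             sub_agent_permissions: Iterable[str]) -> AuthContext:
    """Hand the invoking user's context to a sub-agent.

    Effective permissions are the intersection of what the user holds and
    what the sub-agent is configured for, so access can only shrink.
    """
    return replace(
        ctx,
        permissions=ctx.permissions & frozenset(sub_agent_permissions),
        chain=ctx.chain + (sub_agent,),
    )


def require(ctx: AuthContext, permission: str) -> None:
    """Raise if the current context does not grant the permission."""
    if permission not in ctx.permissions:
        path = " > ".join(ctx.chain) or "root"
        raise PermissionError(f"{ctx.user_id} via {path} lacks {permission}")
```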

From Agents That Execute to Agents You Can Trust

The enterprise AI conversation has reached an inflection point. Organizations are no longer asking whether AI agents can automate complex workflows. They are asking whether those agents can be trusted to operate within the boundaries that compliance, governance, and common sense demand.

The answer depends entirely on the security architecture underneath. An agent without authorization is an automation liability. An Agent Mesh without access control is a compliance incident waiting to scale.

Karini AI has built agent security as a first-class platform capability, not an afterthought bolted onto an existing framework. Inbound authorization verifies user identity and enforces role-based access before any agent logic executes. Outbound access control ensures that every external action an agent takes is bound by the invoking user’s actual permissions. Audit logging and observability provide the evidence trail that regulated industries require. And human-in-the-loop gates ensure that the most consequential decisions remain under human control.

This is the shift: from agents that execute to agents that execute responsibly, from open-access meshes to zero-trust agent networks, from opaque automation to fully auditable decision chains.

Enterprise-grade agent security is not the future. It is available today on the Karini AI platform.

FAQ

What is Agent Security and why does it matter for enterprise AI workflows?

Agent Security ensures that every AI agent in a multi-agent workflow authenticates user identity, enforces role-based access to agents and tools, and scopes outbound data access to each user's permissions. Without it, any user who can reach an Agent Mesh can potentially access every agent and every data source behind it, creating compliance violations and data leakage risks across departments.

What is the difference between inbound authorization and outbound access control?

Inbound authorization controls who can reach which agent. It verifies the user's identity, roles, and group memberships before allowing access to specific agents in the mesh. Outbound access control secures what the agent can touch on behalf of that user. When an agent calls external systems like CRMs, ERPs, or APIs, it uses the user's own OAuth2 token, ensuring the agent never sees or retrieves more data than the user is permitted to access directly.

How does Karini AI enforce security without slowing down users?

Karini surfaces OAuth2 tool connections as one-click flows directly in the copilot interface, with no manual token entry required. Pre-execution batch checks verify all required authorizations before a workflow begins, and real-time connection status indicators keep users informed. Agent-level access is checked at workflow construction time and tool-level access at runtime, so unauthorized requests are rejected instantly without wasting compute.
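A pre-execution batch check can be as simple as a set difference between the connections a workflow needs and the connections the user already has. Here is a minimal sketch, assuming a hypothetical `ToolRequirement` record and a `preflight` helper; the tool and provider names are illustrative only.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ToolRequirement:
    """A tool in the workflow and the OAuth2 provider it needs (hypothetical)."""
    tool: str
    provider: str


def preflight(requirements: Iterable[ToolRequirement],
              connected_providers: Iterable[str]) -> dict:
    """Check every required connection before the workflow starts.

    Returns the providers the user still needs to connect, so the UI can
    prompt for all of them at once instead of failing mid-run.
    """
    needed = {r.provider for r in requirements}
    missing = sorted(needed - set(connected_providers))
    return {"ready": not missing, "connect": missing}
```

For a workflow with tools backed by `salesforce` and `hubspot`, a user who has only connected Salesforce gets `{"ready": False, "connect": ["hubspot"]}` up front rather than a failure halfway through execution.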

Does Karini AI Agent Security support compliance requirements like HIPAA, SOX, and FedRAMP?

Yes. Every authorization decision generates a structured audit log entry capturing the user, resource, result, and context. Full observability traces span the entire request lifecycle from authentication through response delivery. Human-in-the-loop gates allow organizations to require human approval on sensitive actions. Together, these capabilities provide the evidence trail and oversight controls that regulated industries require.
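A human-in-the-loop gate is essentially an interrupt-and-resume pattern: high-stakes actions are parked with a ticket and run only after a human approves them. The sketch below illustrates the idea using the due diligence example, where only a senior partner may approve a write-back. `ApprovalGate` and its methods are hypothetical, not Karini AI's API, and the rule that a requester cannot approve their own action is an added assumption.

```python
import uuid
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass
class PendingAction:
    action: str
    run: Callable[[], object]
    requested_by: str


class ApprovalGate:
    """Pause high-stakes actions until a human approves or rejects them."""

    def __init__(self, high_stakes: Iterable[str]):
        self.high_stakes = set(high_stakes)
        self.pending: Dict[str, PendingAction] = {}

    def submit(self, user_id: str, action: str, run: Callable[[], object]) -> dict:
        if action not in self.high_stakes:
            return {"status": "done", "result": run()}
        # Interrupt: park the action and hand back a ticket to resume later.
        ticket = uuid.uuid4().hex
        self.pending[ticket] = PendingAction(action, run, user_id)
        return {"status": "awaiting_approval", "ticket": ticket}

    def resolve(self, ticket: str, approver: str, approved: bool) -> dict:
        item = self.pending[ticket]
        if approver == item.requested_by:
            # Assumed separation of duties; the ticket stays pending.
            raise PermissionError("requester cannot approve their own action")
        del self.pending[ticket]
        if not approved:
            return {"status": "rejected", "approver": approver}
        # Resume: run the parked action only after approval.
        return {"status": "done", "result": item.run(), "approver": approver}
```

A production gate would persist pending tickets and pair each `resolve` call with an audit entry so the approval itself becomes part of the evidence trail.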